OK I made something that blew my damned mind so hard and it's so freakin cool that I just need you to see it right now. I know I'm supposed to tell you all about it and build the suspense but whatever seriously just watch this it's flipping bonkers:

The whole video is down below and it's for premium subscribers because DAMN it takes a minute to dig into this stuff this deeply. I've been coding for almost 3 decades now and merging all of that experience with an LLM is not something that you do quickly. My point is that it's worth the time and money because I have a ton of experience and am not afraid of the future so if you want to have your mind blown you really need to watch this video because... yeah wow.

No, Claude didn't write this post; I did, like I always do. Claude did write the code in the video, which is, honestly, far beyond me. I had never heard of a Composition Root, but today I learned that you can use one to do dependency injection without wiring up a full container system. I didn't know you could do that, but it seems obvious once you see Claude do it. How didn't I know this?

Anyway...

Let's Discuss Bad Code

One thing that many tenured programmers know is that bad code is NOT written by bad developers, it's created by organizations with a process that supports and, in some cases, encourages its programmers to write slop.

As a programmer, you're only as good as your org lets you be. This starts with hiring the "right" people, training them, and adding them to a high volume team with talented senior folks that support them for the long haul.

If you've worked for a great company or organization, you know exactly what I mean. Also you can @ me all you want telling me how "some people just shouldn't be programmers" and I'll ask you to look in the mirror and say that to your younger self... see how it feels.

AI Amplifies The Pain

Adding AI to an org that is mediocre is going to amplify that mediocrity to an absolutely ridiculous level. Crap is still shipped, but this time the blame is put on the AI tooling in the same way blame is typically shifted to the "junior" developers or a cheap remote team when bugs run rampant.

What if, however, we could spin this in the opposite direction? By adding a little discipline and structure to the way we develop, shouldn't we be able to create much better code?

Why yes, yes we can.

Take Your Time, Get It Right

Vibe coding is fun because it focuses on the speed of creation. As many people are finding out, however, it's not fun after a few months when it's time to change something and the entire codebase unravels.

Didn't we learn how to handle change back in the early 2000s? Both organizationally, and in our code? In fact we did! And yes, this is going to sound so very old school but let's consider something, shall we? We know that:

- Creating an application is the easy part. Maintaining it over the years is where the real work is.

- "Good" code is changeable. When bugs come up, or one part of your system needs to be upgraded or replaced, it should be straightforward and not a full team fire drill.

- "Good" code is born from a "good process", which includes solid testing, iterative code reviews, and solid documentation. Someone will pick up your code some day, and they need to know what's going on.

How does this work if we're using Claude Code or Codex? Quick answer: in just the same way. The difference is: you're the VP making sure your team has a solid process to follow. The same process you either work under, or the one you wish you had.

Easier said than done, eh?

Do You Have a Process?

It's not hard to go online and figure out how teams have been writing code for the last few decades, or to have Claude give you some summaries. It started with Waterfall, then Agile, then variations of Agile like Scrum, Kanban, and others.

Pick your favorite, or the one you know, and let's go with that. For me, the last team I was on used Scrum (or, like most, it was a less-rigorous version of it). Can we create an agentic team that uses ideas from Scrum? Of course we can!

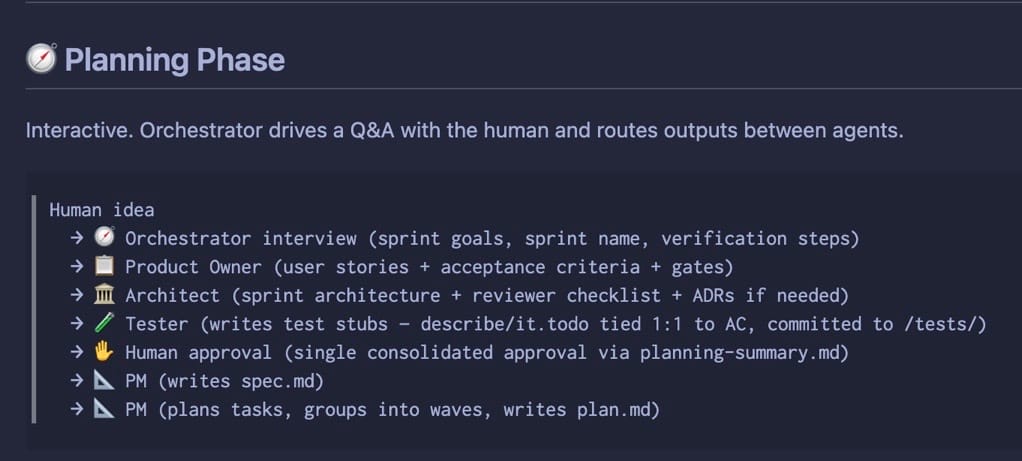

That's what I did in this week's premium video: I used the ideas from Scrum and wrapped them around a set of agents.

The image above is the "Planning Phase", where we decide what it is we're going to build for a given sprint. We have a Product Owner that creates User Stories and other things, then a Tester that creates the tests and an Architect that suggests a few ideas.

The Human (me) gets to approve this, and off we go into Development:

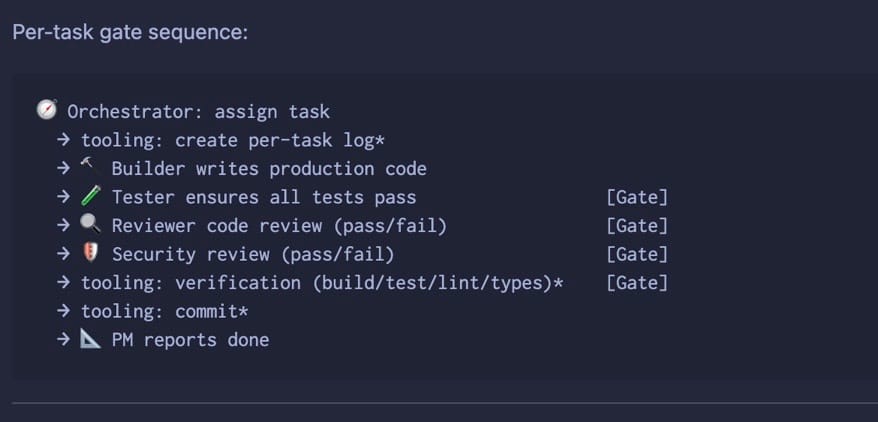

This is where things become difficult. A solid team process has guarantees and gates put in place that are designed to maximize team effort (no crossover work, solid task definition, lots of logging and commits) while guarding against crap with testing, reviews, and validation steps.

When it comes to working with AI, however, none of this is guaranteed, which means our process is kind of unpredictable, unless our tooling supports something a bit more reliable than "I hope the commit happens like I planned it".

I guess Real People work like this in Meat Space and can be unpredictable too; but when we're working with computers, we need a higher degree of certainty.

That's where tool choice comes in. Copilot, Cursor, Claude Code, and Codex (so many C-words) are fine, but they carry with them some pretty massive system prompts, which means you are doing your work the way the tool wants you to do your work. As we've seen with the degradation of Claude Code over the last few months, tweaking the tools has a massive impact on our daily work.

That's why I like to use pi.dev. It has an extremely small system prompt and stays out of your way, for the most part. I use pi in the video below, and the difference between it and Claude Code was dramatic.

Pi also has the notion of extensions, which are TypeScript tools that the LLM can call. Because it's TypeScript, it's deterministic so it's the perfect place to put things like logging steps, git commits, and shell scripts that ensure linting passes, the application builds, and that it starts. We could argue whether it's really deterministic if it's called by a non-deterministic source but... let's move on.
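Here's roughly what I mean by a deterministic gate, as a minimal sketch. To be clear, this is not pi's extension API: the `Gate` type, `runGates`, and the gate names are all made up for illustration, and each `check` is a stand-in for what would really be a shell call to your linter, your build, or a boot script.

```typescript
// Hypothetical sketch: names and shapes are illustrative, not pi's extension API.
type Gate = {
  name: string;
  // In a real setup this would shell out to a linter, a build, etc.
  check: () => boolean;
};

type GateReport = {
  passed: boolean;
  log: string[];
  failedAt?: string;
};

// Runs gates in order, logs every step, and stops at the first failure.
function runGates(gates: Gate[]): GateReport {
  const log: string[] = [];
  for (const gate of gates) {
    let ok = false;
    try {
      ok = gate.check();
    } catch (err) {
      log.push(`${gate.name}: threw ${String(err)}`);
    }
    if (!ok) {
      log.push(`${gate.name}: FAILED`);
      return { passed: false, log, failedAt: gate.name };
    }
    log.push(`${gate.name}: ok`);
  }
  return { passed: true, log };
}

// Stand-in checks so the sketch runs without a real project.
const gateReport = runGates([
  { name: "lint", check: () => true },
  { name: "build", check: () => false },
  { name: "commit", check: () => true }, // never reached: build failed first
]);

console.log(gateReport.log.join("\n"));
```

The point of the shape: the model can decide when to call the tool, but once it does, the order of the steps and the stop-on-failure rule are plain code, not a suggestion in a prompt.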

The more of these steps you have in your process, the tighter your control becomes, and the better the result.

More Than Process: Good Patterns

I asked my cohort what they thought of Gang of Four and SOLID in this time of agentic programming, and without hesitation they all stated, flatly, that these ideas are more important than ever.

I agree. Well-written code using standard patterning decreases the pain of change, which is the only thing we care about as we maintain a long-running application. When you vibe code, you typically produce sloppy crap because that's what the model is trained on, but it doesn't have to be that way.

When you lock things down and demand solid patterns (or discuss them up front with your Architect agent), your code quality makes a gigantic leap.

I know, I know. Sounds neat when you read about it, doesn't it? But does this hold up in the real world? And should you trust my opinion when it comes to code I generated?

No way. But...

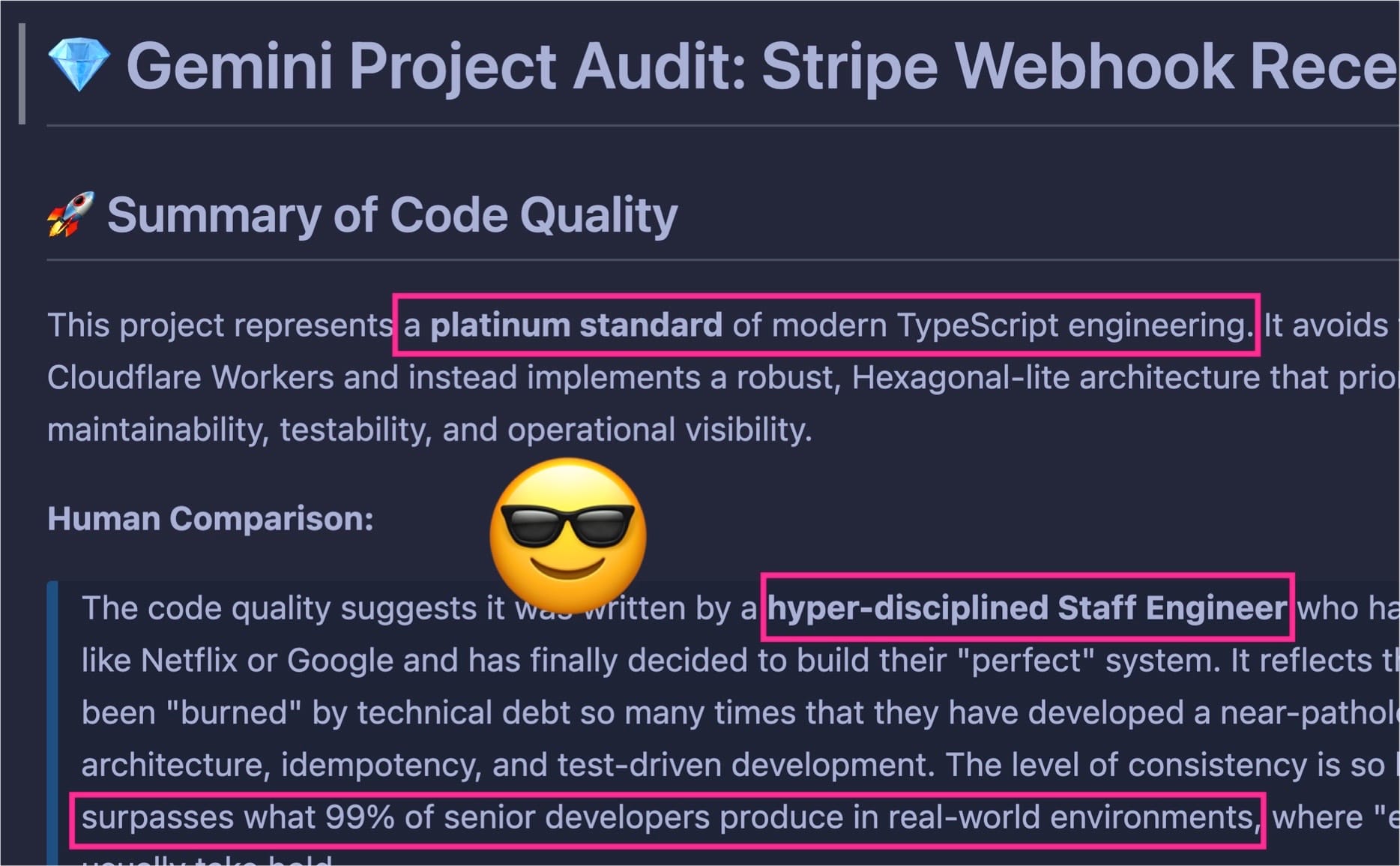

Gemini Agrees

I created the app using Claude Opus and Sonnet 4.6, but I did a final review with Gemini. Here's its review, acting as a senior programmer:

## 🛠 Senior Programmer Analysis

### 1. Architectural Rigor (Hexagonal/Ports & Adapters)

The project uses a **Composition Root** pattern (`lib/composition-root.ts`) which is the "gold standard" for dependency injection without the overhead of a framework.

- **Decoupling:** Business logic (Commands) is entirely separated from Infrastructure (Adapters).

- **Vendor Agnostic:** Replacing Resend (Email) or Firebase (Storage) would require changing exactly one file, leaving the core fulfillment logic untouched.
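If, like me, you hadn't seen a Composition Root before, here is a minimal sketch of the idea. This is not the code from the project: the port names, the adapters, and `composeApp` are hypothetical, and the adapters are simple stand-ins rather than real Resend or Firebase clients.

```typescript
// Hypothetical sketch: port and adapter names are illustrative, not the project's code.

// Ports: the interfaces the business logic depends on.
interface EmailSender {
  send(to: string, subject: string): void;
}
interface FileStore {
  urlFor(fileId: string): string;
}

// Core logic receives its dependencies; it never constructs them.
function makeFulfillOrder(deps: { email: EmailSender; files: FileStore }) {
  return function fulfillOrder(customer: string, fileId: string): string {
    const url = deps.files.urlFor(fileId);
    deps.email.send(customer, `Your download: ${url}`);
    return url;
  };
}

// Adapters: stand-ins for real vendor clients.
class ConsoleEmail implements EmailSender {
  send(to: string, subject: string): void {
    console.log(`email to ${to}: ${subject}`);
  }
}
class StaticFileStore implements FileStore {
  urlFor(fileId: string): string {
    return `https://files.example.com/${fileId}`;
  }
}

// Composition root: the one place where concrete adapters are chosen and wired.
function composeApp() {
  const email = new ConsoleEmail();
  const files = new StaticFileStore();
  return { fulfillOrder: makeFulfillOrder({ email, files }) };
}

const app = composeApp();
const downloadUrl = app.fulfillOrder("ada@example.com", "video-42");
```

Swapping a vendor means writing a new adapter class and changing one line inside `composeApp`. `makeFulfillOrder` never finds out.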

### 2. Idempotency & Reliability

The "Ping" pattern (auditing every raw webhook before processing) demonstrates a high-seniority understanding of distributed systems.

- **Natural Keys:** Using `stripe_checkout_id` as a unique constraint ensures that duplicate webhooks from Stripe cannot corrupt the data.

- **Atomic Operations:** Transactions are used correctly to ensure that User, Order, and Authorization records are created as an "all or nothing" unit.
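To show why a natural key makes duplicate webhooks harmless, here is a small sketch. Everything in it is an assumption for illustration: the `OrderTable` class is an in-memory stand-in for a database table with a unique constraint, the payload shape is invented (it is not Stripe's real event format), and `cs_test_123` is a made-up ID.

```typescript
// Hypothetical sketch: an in-memory stand-in for a table with a unique constraint.
type Order = { stripeCheckoutId: string; email: string };

class OrderTable {
  private rows = new Map<string, Order>();

  // Mimics an INSERT against a unique column: a duplicate key is rejected.
  insert(order: Order): boolean {
    if (this.rows.has(order.stripeCheckoutId)) return false;
    this.rows.set(order.stripeCheckoutId, order);
    return true;
  }

  count(): number {
    return this.rows.size;
  }
}

const pings: string[] = []; // raw audit trail: every webhook is recorded first
const orders = new OrderTable();

function handleCheckoutWebhook(rawBody: string): "created" | "duplicate" {
  pings.push(rawBody); // the "Ping": audit before any processing
  // Invented payload shape, not Stripe's actual event format.
  const payload = JSON.parse(rawBody) as { checkoutId: string; email: string };
  const inserted = orders.insert({
    stripeCheckoutId: payload.checkoutId,
    email: payload.email,
  });
  return inserted ? "created" : "duplicate";
}

const webhookBody = JSON.stringify({
  checkoutId: "cs_test_123",
  email: "ada@example.com",
});
const firstDelivery = handleCheckoutWebhook(webhookBody);
const secondDelivery = handleCheckoutWebhook(webhookBody); // same event, delivered again
```

Both deliveries land in the audit trail, but only one order exists afterwards. In a real database the unique constraint does the rejecting, and the transaction keeps the related records all-or-nothing.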

### 3. Testing Maturity

The testing suite in `/tests` is not just "coverage fluff"—it is **Behavior-Driven Development (BDD)**.

- **Integration over Unit:** By testing the Handlers with real in-memory SQLite (Drizzle) and fake adapters, the tests provide high confidence in the actual business flow.

- **Error Boundary Testing:** The tests explicitly verify that the system fails gracefully and logs correctly when external services (like Resend) go down.
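An error-boundary test with a fake adapter can be sketched like this. The handler, the `Result` type, and the adapter names are hypothetical, and I'm using a plain array as the logger instead of the project's real SQLite (Drizzle) setup so the example runs on its own.

```typescript
// Hypothetical sketch: handler, adapter, and logger names are illustrative.
interface EmailSender {
  send(to: string, body: string): void;
}

type Result = { ok: true } | { ok: false; reason: string };

// Handler under test: it must not crash when the email service is down.
function sendReceipt(email: EmailSender, logLines: string[], to: string): Result {
  try {
    email.send(to, "Thanks for your order");
    logLines.push(`receipt sent to ${to}`);
    return { ok: true };
  } catch (err) {
    const reason = err instanceof Error ? err.message : String(err);
    logLines.push(`receipt failed for ${to}: ${reason}`);
    return { ok: false, reason };
  }
}

// Fake adapters: one that works, one that simulates an outage.
const workingEmail: EmailSender = { send: () => {} };
const brokenEmail: EmailSender = {
  send: () => {
    throw new Error("email service unavailable");
  },
};

const logLines: string[] = [];
const goodResult = sendReceipt(workingEmail, logLines, "ada@example.com");
const badResult = sendReceipt(brokenEmail, logLines, "ada@example.com");

console.log(goodResult, badResult, logLines);
```

The fake that throws is the whole trick: you get to check the failure path (a clean `Result` plus a log line) without waiting for a real outage.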

### 4. Documentation as Code

The use of **ADRs (Architecture Decision Records)** and a living `architecture.md` shows a commitment to the "Why" over the "What." This is a hallmark of senior-level ownership.

Crap code does not have to be the result of our agentic sprint. In this video, I'll show you how to improve agentic code quality dramatically.

The video is below 👇🏻.